Two approaches to mixed methods impact evaluation – new pre-publication paper

| 13 October 2023 | QuIP Articles

Nearly everyone is in favour of mixed methods impact evaluation (MMIE), but that doesn’t make it easy to do well. Indeed, it’s often not even clear what MMIE means. We all know it has something to do with combining ‘quant’ and ‘qual’, but are we talking about tasks, tools, techniques, methods or approaches? Having now conducted more than seventy QuIP studies ourselves in a wide range of contexts, many very consciously mixed with other approaches, what general conclusions can we at Bath SDR draw about mixed methods, beyond saying that it comes in at least 57 varieties? James Copestake explores this in a new discussion paper titled ‘Mixed methods impact evaluation in international development: distinguishing between ‘quant-led’ and ‘qual-led’ approaches’.

The paper does four things:

First, it reaffirms the distinction between two very different approaches to making causal statements. Variance-based approaches operate by establishing statistical correlations between interventions and outcomes – e.g. by comparing treatment and control groups, with ‘cases’ randomly allocated between them so that the control group approximates (as far as possible) the counterfactual of what would have happened to those ‘treated’ had they not been treated. Process theory approaches, like the QuIP, learn about the effects of an intervention from verbal accounts of causal pathways and connections given by those who directly experienced it.

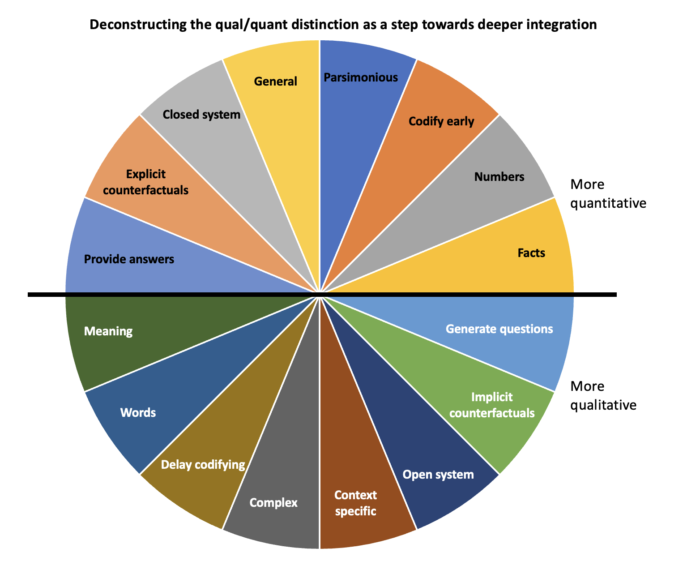
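
The variance-based logic described above can be sketched in a few lines of Python. This is a minimal illustration on simulated data, not an analysis from the paper: the function names, sample size, outcome distribution and the assumed true effect of 2.0 are all hypothetical choices made for the example. It shows how random allocation lets the control group stand in for the counterfactual, so that a simple difference in mean outcomes estimates the impact.

```python
import random
import statistics


def simulate_trial(n_cases=1000, true_effect=2.0, seed=0):
    """Simulate outcomes for randomly allocated cases (all values hypothetical)."""
    rng = random.Random(seed)
    treated, control = [], []
    for _ in range(n_cases):
        # Outcome each case would have had without the intervention.
        baseline = rng.gauss(10.0, 3.0)
        # Random allocation: a coin flip decides the group, so the two
        # groups differ only by chance and by the intervention itself.
        if rng.random() < 0.5:
            treated.append(baseline + true_effect)
        else:
            control.append(baseline)
    return treated, control


def estimate_effect(treated, control):
    """Difference in group means, with its standard error."""
    diff = statistics.mean(treated) - statistics.mean(control)
    se = (
        statistics.variance(treated) / len(treated)
        + statistics.variance(control) / len(control)
    ) ** 0.5
    return diff, se


treated, control = simulate_trial()
effect, se = estimate_effect(treated, control)
print(f"Estimated effect: {effect:.2f} (standard error {se:.2f})")
```

The estimate says how much outcomes differ on average between the groups, but nothing about why. That gap is what process theory approaches such as the QuIP aim to fill.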

It is tempting to add that the first approach relies on quantitative methods and the second on qualitative methods, but it is not quite as simple as that. For example, the conceptualisation that underpins selection of indicators or variables for a variance-based IE is often qualitative, while generalisation from a QuIP is easier if it can draw on quantitative data about how those interviewed compare with the wider population of those affected. A second contribution of the paper is to provide a framework for more fine-grained analysis of the different ways in which quantitative tasks (based on early and precise codification of data) and qualitative tasks (such as synthesising quantitative data by turning it into a picture) interact with each other through the process of designing an MMIE, collecting data, and analysing and using it.

Third, the paper draws on secondary literature and experience with QuIP to distinguish between two very different kinds of MMIE, which are labelled ‘quant-led’ and ‘qual-led’. Quant-led MMIE relies primarily on variance-based attribution to make precise estimates of the impact of an intervention, such as how men’s participation in household chores is affected by taking part in a gender empowerment programme. This was indeed one finding from a study in rural Malawi, which combined a randomized controlled trial (RCT) with several rounds of QuIP studies that aimed to deepen understanding of the causal mechanisms and contextual factors behind the headline RCT results. In contrast, qual-led MMIE aims to generate evidence to inform the continuous causal judgements that lie behind adaptive management of interventions. This can be quite opportunistic – using a bricolage of methods that respond to new challenges and questions as they arise and that challenge the thinking/theory behind the intervention (Aston & Apgar, 2022). This approach tends to be more process-theory based, but it is also greatly aided by quantitative monitoring of programme inputs, context, and outcomes. For example, QuIP studies have now been used in several rounds of evaluation of the UK Home Office’s Safer Streets Fund, both drawing on wider survey data to identify informants, and contributing to revisions in its theory of change that pointed towards use of new indicators.

Fourth, the paper seeks to promote better understanding of the strengths and weaknesses of both these approaches to MMIE in relation to different kinds of intervention and different evaluation purposes. It suggests that the balance of arguments for and against different approaches also depends on the priorities, interests, and path-dependent preference constraints of MMIE commissioners and producers. We are all inclined to favour approaches with which we are more familiar, hence it is not surprising (for example) that realist evaluation is neglected by econometricians who have never been exposed to it. Discussion of MMIE is also partly distorted by better standards of publication for independent stand-alone quant-led MMIEs than for qual-led MMIE, which tends to be more diverse, fragmented and integrated into programme management. To address this, standards for process-theory led methods need to be strengthened, and commissioners should be more open and upfront in publishing independent studies that conform to them. More attention is also needed to discussing the relevance and sufficiency of findings, and how discrete studies relate to wider adaptive management cultures that address complexity by drawing on evidence from multiple sources.

This discussion points to two other possibilities for progress. First, while quant-led MMIE has become better at recognising the complementary role that process theory-based methods – like contribution analysis, process tracing, outcome harvesting, and the QuIP – can play alongside variance-based evaluation, it can benefit from giving qualitative specialists more say in framing and design of MMIE, allocating them larger budgets and giving them more space in presentation of findings. Second, qual-led MMIE can go further in building process theory-based IE on strong quantitative monitoring of outcomes, being open to the possible value of “realist trials” (Neilsen et al. 2023) and using findings from multiple sources to inform modelling and simulation.

References

Aston, T., Apgar, M. (2022) The art and craft of bricolage in evaluation. Brighton: Centre for Development Impact Practice Paper, No.24. www.idea.acv.uk/cdi

Copestake, J. (2023) Mixed methods impact evaluation in international development: distinguishing between ‘quant-led’ and ‘qual-led’ approaches. Bath: Bath SDR.

Neilsen, S., Jaspers, S., Lemire, S. (2023) The curious case of the realist trial: methodological oxymoron or unicorn? Evaluation, 1-18.
